Sony's "CLEWS" Can Sniff Out Musical DNA: Is the AI Music Gold Rush Already Over?

- Jared F.

- Feb 20

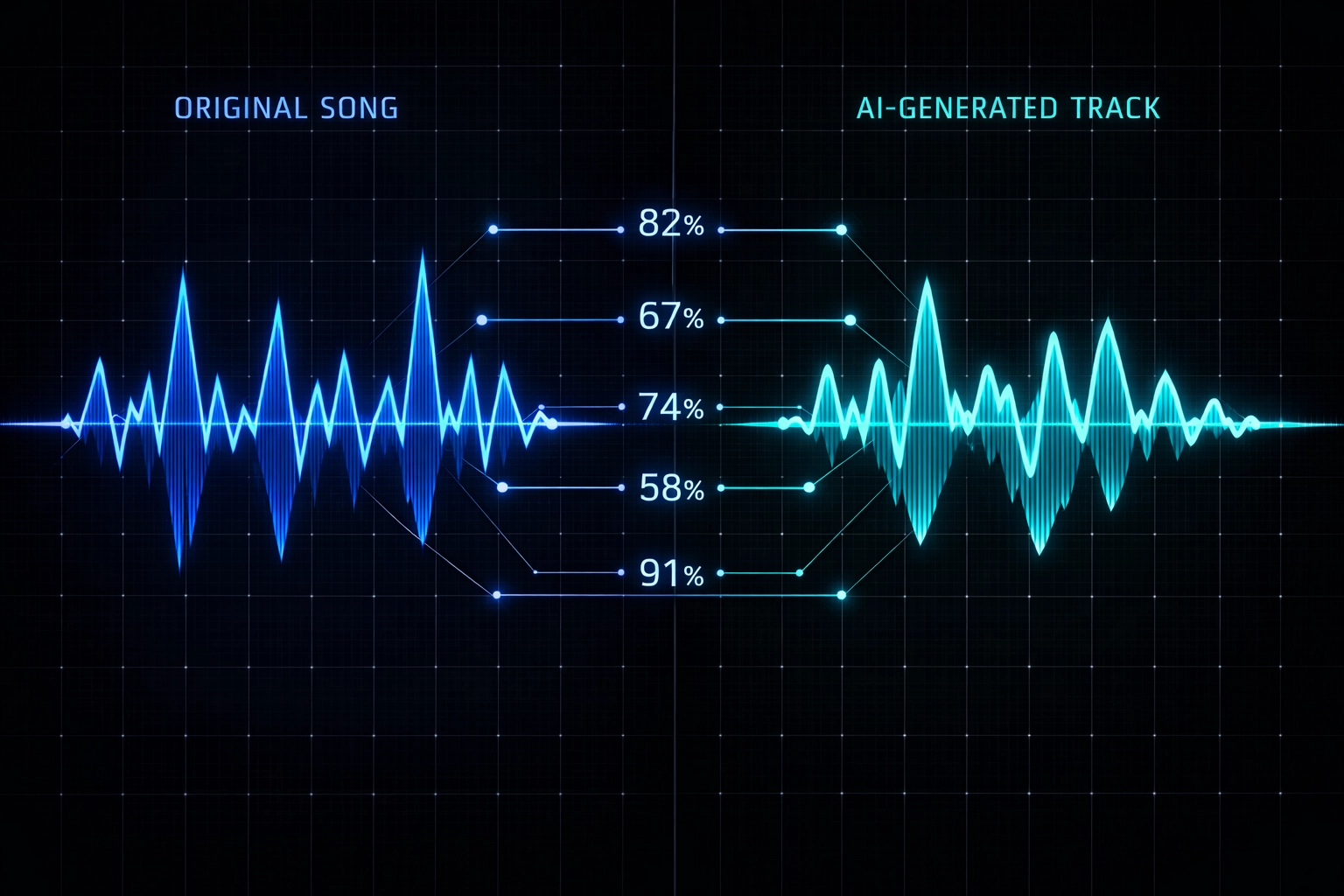

We're witnessing a pivotal moment in the AI music wars, one where the technology designed to protect music might be just as sophisticated as the tech that generates it. Sony Music Entertainment claims it can now detect "musical DNA" inside AI-generated tracks, identifying which original works influenced a synthetic composition down to specific percentage contributions. The tool is called CLEWS, and if it works as advertised, it could fundamentally reshape how we think about AI music attribution, royalties, and the ongoing flood of machine-generated tracks hitting streaming platforms.

But here's the million-dollar question: Is the AI music gold rush already over before it really started? Or are we just entering a more technical, regulated phase where the wild west gets a sheriff?

What the Hell Is CLEWS?

CLEWS, short for Contrastive Learning from Weakly-Labeled Audio Segments, is Sony AI's answer to the attribution problem that has plagued the industry since generative tools such as Suno and Udio started churning out radio-ready tracks. Unlike traditional content ID systems that match exact audio fingerprints, CLEWS operates at a deeper level, analyzing short audio snippets (around 20 seconds each) to map musical relationships between original works and AI-generated output.

Think of it as a bloodhound trained to sniff out melodic contours, rhythmic patterns, chord progressions, and harmonic structures, the fundamental building blocks that make a song sound like it's related to another song, even when it's not an exact copy. This is critical because AI music generators don't sample in the traditional sense. They learn patterns from training data and synthesize new audio that feels influenced by those patterns without directly copying waveforms.

How CLEWS Works:

Snippet-Based Analysis: Instead of analyzing entire songs, CLEWS breaks tracks into 20-second segments, allowing it to catch subtle similarities that traditional matching tools might miss.

Near-Duplicate Detection: The system identifies unauthorized versions, remixes, or "near-duplicates" that slip past conventional fingerprinting.

Training Data Attribution: Sony has developed methods to trace which specific songs from an AI model's training dataset most influenced a generated track, essentially reverse-engineering the model's creative lineage.

Sony employs two approaches here. When AI developers cooperate, CLEWS can connect directly to their base model systems for precise attribution. When they don't, the system compares AI-generated output against Sony's vast catalog to estimate which original works were likely used in training.
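To make the snippet-based idea concrete, here is a minimal Python sketch of segment-level matching. It is a toy illustration under stated assumptions, not Sony's code: `segment_bounds`, `cosine`, and `best_segment_match` are hypothetical helpers, the vectors are hand-made stand-ins for embeddings a trained model would produce, and cosine similarity is simply a common choice that may differ from what CLEWS actually uses. The 20-second window comes from the description above; the 10-second hop is assumed.

```python
import math

def segment_bounds(duration_s, seg_len_s=20.0, hop_s=10.0):
    """Return (start, end) times of fixed-length windows covering a track."""
    if duration_s <= seg_len_s:
        return [(0.0, duration_s)]  # short track: one window
    bounds = []
    start = 0.0
    while start + seg_len_s <= duration_s:
        bounds.append((start, start + seg_len_s))
        start += hop_s  # assumed overlap between windows
    return bounds

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def best_segment_match(query_segs, ref_segs):
    """Return (score, query_idx, ref_idx) for the most similar segment pair."""
    return max(
        (cosine(q, r), qi, ri)
        for qi, q in enumerate(query_segs)
        for ri, r in enumerate(ref_segs)
    )

# Hand-made "embeddings": one vector per 20-second segment (illustrative only).
generated_track = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
catalog_track = [[0.0, 0.0, 1.0], [0.0, 1.0, 0.0]]

windows = segment_bounds(60.0)
score, q_idx, r_idx = best_segment_match(generated_track, catalog_track)
print(len(windows), score, q_idx, r_idx)
```

The point of comparing segment pairs rather than whole tracks is that a single strongly matching 20-second stretch can surface even when the rest of the two tracks has nothing in common.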

The "30% Artist A, 10% Artist B" Problem

Here's where it gets wild. Sony claims CLEWS can identify not just which protected works influenced an AI track, but how much. Imagine an AI-generated song that sounds like it borrowed 30% of its melodic DNA from Taylor Swift, 10% from The Weeknd, and 15% from a Daft Punk chord progression. This kind of granular attribution is unprecedented, and it's the key to solving the royalty distribution nightmare that AI music creates.

Right now, when an AI model trains on millions of copyrighted songs and then generates something "new," there's no clear mechanism for compensating the original artists whose work shaped that output. Traditional sampling royalties don't apply because there's no literal sample. Songwriting credits don't work because the AI didn't "write" in the legal sense. And streaming royalties default to whoever uploaded the track.

CLEWS aims to change that by providing a technical framework for Protective AI, systems designed to safeguard artists' intellectual property in the generative era. If Sony can prove that an AI track contains identifiable musical DNA from specific copyrighted works, it opens the door for licensing agreements, royalty splits, and legal frameworks that ensure original creators get paid when their influence appears in synthetic music.
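If influence percentages like these ever fed a royalty model, the arithmetic itself would be simple. The sketch below is purely hypothetical: `split_royalties` is an invented helper, no such payout scheme has been announced, and the rule that the unattributed remainder stays with the uploader is an assumption made for illustration.

```python
def split_royalties(pool_cents, influence_pct):
    """Split a royalty pool (in cents) by integer influence percentages.

    Hypothetical rule: whatever is not attributed to a named source
    stays with the uploader.
    """
    total = sum(influence_pct.values())
    if total > 100 or any(p < 0 for p in influence_pct.values()):
        raise ValueError("percentages must be non-negative and sum to at most 100")
    # Integer math on cents avoids floating-point rounding surprises.
    payouts = {name: pool_cents * pct // 100 for name, pct in influence_pct.items()}
    payouts["uploader"] = pool_cents - sum(payouts.values())
    return payouts

# The 30% / 10% / 15% example from above, applied to a $1,000.00 pool.
shares = {"Artist A": 30, "Artist B": 10, "Artist C": 15}
print(split_royalties(100_000, shares))
# {'Artist A': 30000, 'Artist B': 10000, 'Artist C': 15000, 'uploader': 45000}
```

The hard part is not this division but producing percentages that are accurate and legally defensible in the first place.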

Does It Actually Work?

The short answer: We don't fully know yet. Sony has published research demonstrating CLEWS's technical capabilities, but no commercial rollout has been announced, and adoption across the industry remains uncertain. Some insiders note that AI music companies "prioritize improving model performance" over preventing intellectual property infringement, suggesting that even if the technology exists, enforcement is another question entirely.

There's also the question of accuracy. Musical influence is subjective: music theory scholars have debated for decades whether a I-V-vi-IV chord progression is universal enough to "belong" to anyone. Can an algorithm truly distinguish between inspiration, coincidence, and infringement? Sony believes CLEWS can, but legal precedent for this kind of attribution is still being written in real time.

The AI Music Gold Rush: Flood vs. Flow

Now let's talk about the elephant in the room: Is there actually an AI music gold rush happening, or are we just witnessing a flood with no real market?

The numbers tell a fascinating story. Millions, potentially tens of millions, of AI-generated tracks are currently flooding digital streaming platforms (DSPs). Tools like Suno and Udio have democratized music creation to the point where anyone with a keyboard can generate a full song in minutes. The supply side of the equation has exploded.

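But here's the reality check: Despite this torrent of AI music, these tracks account for less than 1% of actual streaming consumption. Deezer, for example, has reported that fully AI-generated tracks make up nearly a third of its uploads but only about 0.5% of its streams (see Sources). There's a massive gap between creation and consumption, a disconnect between how easy it is to make AI music and how hard it is to get anyone to actually listen to it.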

The Numbers Game:

Creation: Millions of AI tracks uploaded monthly to Spotify, Apple Music, and YouTube Music.

Consumption: Less than 1% of total streams across DSPs.

The Gap: Easy creation doesn't equal audience engagement.
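A quick back-of-the-envelope calculation shows how lopsided that gap is. The figures here are illustrative: the 0.5% stream share is the Deezer figure listed under Sources, the one-third upload share is a rounded assumption based on the same report's headline, and `representation_ratio` is a hypothetical helper.

```python
def representation_ratio(upload_share, stream_share):
    """How many times larger a category's upload share is than its stream share."""
    if stream_share <= 0:
        raise ValueError("stream_share must be positive")
    return upload_share / stream_share

# Assumed inputs: about one third of uploads, about 0.5% of streams.
ratio = representation_ratio(upload_share=1 / 3, stream_share=0.005)
print(f"AI tracks are over-represented in uploads by roughly {ratio:.0f}x")
```

Under these assumptions, AI tracks take up dozens of times more of the upload queue than of what listeners actually stream.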

This suggests that the "gold rush" narrative might be overstated. Yes, the tools are revolutionary. Yes, barriers to entry have collapsed. But the market isn't responding the way some predicted. Listeners still gravitate toward human artists, established brands, and music with cultural context. AI tracks often function as background filler, production elements, or demo templates rather than standalone hits.

Enter the Centaur Producer

This is where the Centaur Producer model becomes relevant. Instead of AI replacing musicians, we're seeing a hybrid approach where human creativity leverages AI tools for specific tasks (generating stems, exploring chord variations, automating tedious production work) while maintaining human oversight and artistic direction.

CLEWS fits into this framework perfectly. If attribution technology can identify which training data influenced an AI-generated track, it enables transparent workflows where producers can intentionally reference specific influences, license them properly, and create new music that respects the original creators' rights.

Imagine a future where a producer uses an AI tool to generate a melody inspired by '90s trip-hop, and CLEWS immediately flags that the output contains 25% Portishead influence. The producer can then decide whether to license that influence, modify the output, or move in a different direction, all before the track ever hits a DSP.

Is the Wild West Ending?

So, is Sony's CLEWS technology the death knell for the lawless AI music era? Not quite, but it's definitely the beginning of the end of the unregulated phase.

The industry is rapidly moving toward a more structured environment where AI music exists within legal frameworks, attribution systems, and licensing agreements. We're seeing this play out in real time:

Suno's Warner Music Group deal (covered in our earlier piece) signals that major labels are negotiating with AI platforms rather than just suing them.

Training data transparency initiatives are pushing AI developers to disclose their datasets.

Protective AI technologies like CLEWS are giving rights holders tools to monitor and enforce their copyrights.

But the wild west isn't disappearing; it's just getting more technical. The question is shifting from "Can we stop AI music?" to "How do we integrate it responsibly?" And that's where tools like CLEWS become essential infrastructure rather than just defensive weapons.

What This Means for Artists and Producers

For creators, the CLEWS era represents both opportunity and accountability. On one hand, attribution technology means your influence could be recognized and compensated even when AI models reference your work indirectly. On the other, it means the days of uploading AI-generated tracks without considering their training data lineage are numbered.

Key Takeaways:

Attribution is becoming automated: Tools like CLEWS will likely be integrated into DSP upload processes, flagging potential copyright issues before tracks go live.

Licensing frameworks are emerging: Expect to see new royalty models that account for "influence percentages" in AI-generated music.

Transparency is the new standard: Knowing what training data influenced your AI-generated track will become as important as knowing your mix engineer's credentials.

The gold rush might not be over, but the prospectors are now required to file permits. And in an industry built on intellectual property, that's probably a good thing.

Sources

Sony AI: CLEWS / defensible AI research context — https://ai.sony/blog/Sony-AI-at-ICML-Sharing-New-Approaches-to-Reinforcement-Learning-Generative-Modeling-and-Defensible-AI/

Sony AI (code): CLEWS repository — https://github.com/sony/clews

Research paper (arXiv): “Supervised Contrastive Learning from Weakly-Labeled Audio Segments for Musical Version Matching” — https://arxiv.org/abs/2502.16936

Music Business Worldwide: Deezer reporting on AI-generated uploads vs. stream share (0.5% of streams) — https://www.musicbusinessworldwide.com/nearly-a-third-of-all-tracks-uploaded-to-deezer-are-now-fully-ai-generated-says-platform/

The AI Musicpreneur: “The Great AI Music Flood…” — https://www.aimusicpreneur.com/ai-music-news/ai-generated-music-deezer-music-streaming-platform/